Text by: Chaudhary Muhammad Aqdus Ilyas - University of Cambridge

Let’s begin our journey of integration from where we left off.

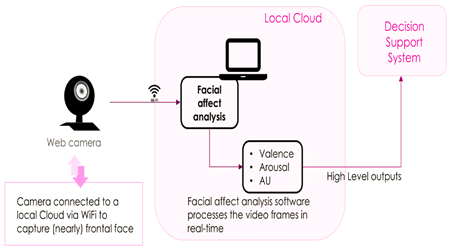

The user’s facial expressions and affect features are recorded only when the user is registered and connected to the Facial-Affect-analysis sensor through the “sensor management” interface of the WAOW application. The Facial-Affect-analysis subsystem informs the WAOW tool about the user’s facial expressions and affect by analysing their face and facial features.

Facial affect analysis recognises emotions in three ways: by classifying facial expressions (e.g. anger, fear, sadness, happiness); by predicting valence (how positive or negative the expression is) and arousal (how active or inactive the expression is); and by detecting facial action unit (AU) activations.

These values are passed as high-level messages to ZeroMQ, together with the time stamp and the user’s pseudoID (generated at user registration). They are displayed on the WAOW application and sent to the Decision Support System (DSS), which uses them to generate suggestions and recommendations for the user.
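To make the message contents concrete, here is a minimal Python sketch of how one such high-level message might be assembled and serialised. The article does not specify the actual WAOW message schema, so the class name `FacialAffectMessage`, all field names, the JSON encoding, and the [-1, 1] range for valence and arousal are assumptions made purely for illustration.

```python
import json
import time
from dataclasses import asdict, dataclass, field


@dataclass
class FacialAffectMessage:
    """Hypothetical message layout; NOT the actual WAOW schema."""
    pseudo_id: str      # pseudoID generated at user registration
    timestamp: float    # time stamp, here seconds since the epoch (assumed)
    expression: str     # e.g. "anger", "fear", "sadness", "happiness"
    valence: float      # assumed range [-1, 1]: negative to positive
    arousal: float      # assumed range [-1, 1]: inactive to active
    action_units: dict = field(default_factory=dict)  # AU name -> activated?


def encode_message(msg: FacialAffectMessage) -> bytes:
    """Serialise the message to UTF-8 JSON bytes, ready to hand to a transport."""
    return json.dumps(asdict(msg), sort_keys=True).encode("utf-8")


def decode_message(payload: bytes) -> FacialAffectMessage:
    """Rebuild the message on the receiving side (e.g. the application or DSS)."""
    return FacialAffectMessage(**json.loads(payload.decode("utf-8")))


# Illustrative values only; no real measurements are shown here.
example = FacialAffectMessage(
    pseudo_id="user-0001",
    timestamp=time.time(),
    expression="happiness",
    valence=0.6,
    arousal=0.3,
    action_units={"AU6": True, "AU12": True},
)
payload = encode_message(example)
```

With a ZeroMQ binding such as pyzmq, the resulting `payload` bytes could then be passed to a socket's `send` method. The socket type, endpoint and any topic prefix used by the WAOW tool are not described in this article, so they are left out of the sketch.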

The framework of the WAOW Facial-Affect-analysis system

The WAOW tool monitors workers’ well-being and health by assessing their physical, physiological and emotional states during their work routine. The DSS explores the integration of intelligent, sensor-based functionalities into the WA tool to compensate for the user’s declining physical and cognitive capabilities. The DSS also assists users by providing reliable, context-aware, adapted feedback. In this article, we have discussed the main steps involved in integrating the Facial-Affect-analysis system into the WA tool. Other sensors, such as those for monitoring stress, strain or body gestures, follow more or less the same protocols, depending on the nature of the sensor’s input and data output.