Text by the Simulation Technologies Unit of ITCL: Carlos Alberto Catalina, Basam Musleh, Marcos García, Lydia Ramón, Alvaro Montesinos and Patricia Torre

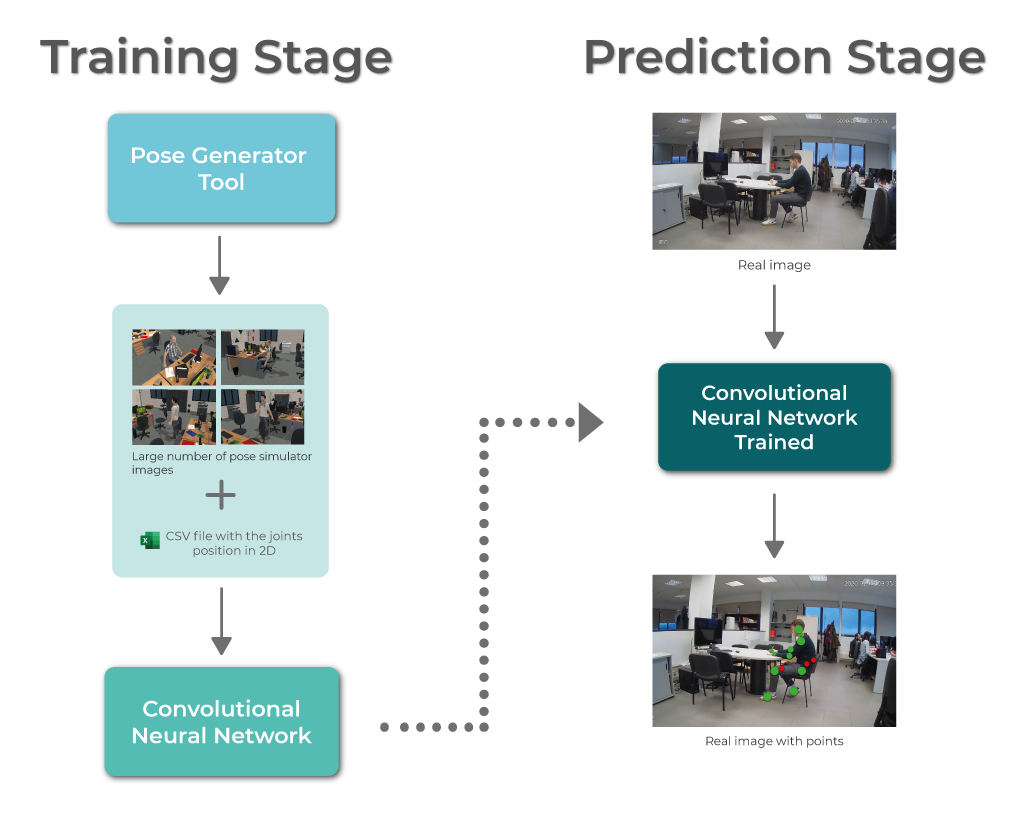

To assess the posture of workers at their workplace, we estimate it with a computer vision system that locates the main joints of the body (see image below). The system also determines whether each joint is visible in the image (green point = visible, red point = occluded). It is based on Convolutional Neural Networks, which require a very large number of examples in the training stage. For this reason, a Pose Generator Tool based on a virtual environment has been developed to generate a large dataset of images with ground truth information.

Following the diagram in the figure, the starting point is the simulation tool, which renders an avatar in different poses and produces a large number of images together with the associated 2D and 3D position of each joint. For each image, the following ground truth information is stored: the name of the image, the position of each joint in the image, and a coefficient that indicates whether that joint is visible in the image.
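As an illustration only, the ground truth stored for one image could be represented as in the sketch below. The field names (`image_name`, `joints`, `x`, `y`, `visible`), the joint labels, the file name, and the JSON layout are assumptions made for this example; the actual file format of the Pose Generator Tool is not described here, and the 3D coordinates are left out for brevity.

```python
import json
from dataclasses import dataclass, asdict
from typing import List


@dataclass
class JointAnnotation:
    name: str      # hypothetical joint label, e.g. "left_wrist"
    x: float       # horizontal pixel coordinate of the joint in the image
    y: float       # vertical pixel coordinate of the joint in the image
    visible: int   # visibility coefficient: 1 = visible, 0 = occluded


@dataclass
class ImageAnnotation:
    image_name: str
    joints: List[JointAnnotation]

    def visible_joints(self) -> List[str]:
        """Return the names of the joints flagged as visible."""
        return [j.name for j in self.joints if j.visible == 1]


def to_json(record: ImageAnnotation) -> str:
    """Serialize one annotation record to a JSON string."""
    return json.dumps(asdict(record))


def from_json(text: str) -> ImageAnnotation:
    """Rebuild an annotation record from its JSON string."""
    data = json.loads(text)
    joints = [JointAnnotation(**j) for j in data["joints"]]
    return ImageAnnotation(image_name=data["image_name"], joints=joints)


# Made-up values, for illustration only.
example = ImageAnnotation(
    image_name="pose_000001.png",
    joints=[
        JointAnnotation("head", 312.0, 85.5, 1),
        JointAnnotation("left_wrist", 270.2, 301.7, 0),
    ],
)
```

Keeping the visibility coefficient next to each joint position means a training script can later decide, per joint, whether an occluded joint should contribute to the loss.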

Once a large number of images have been collected, they are used to train a convolutional neural network that detects each joint of a person in a real image. This makes it easier to analyze the posture that the person adopts during the working day.

The neural network used in the previous step follows the approach of Simple Baselines for Human Pose Estimation and Tracking (Xiao et al., 2018), and will be tested using real images taken inside the ITCL offices (prediction stage).
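Simple Baselines predicts one heatmap per joint and is trained with a mean squared error loss against Gaussian target heatmaps centered on the ground truth joint positions. The sketch below shows, in plain Python, how such a target could be built from a 2D joint position and how a joint location is read back from a heatmap. The heatmap size, the value of `sigma`, the function names, and the use of the visibility coefficient as a loss weight are assumptions for illustration, not a description of the training code used at ITCL.

```python
import math
from typing import List, Tuple

Heatmap = List[List[float]]  # indexed as heatmap[row][col]


def gaussian_heatmap(x: float, y: float, width: int, height: int,
                     sigma: float = 2.0) -> Heatmap:
    """Build a target heatmap with a 2D Gaussian centered on joint (x, y)."""
    return [
        [math.exp(-((col - x) ** 2 + (row - y) ** 2) / (2.0 * sigma ** 2))
         for col in range(width)]
        for row in range(height)
    ]


def decode_peak(heatmap: Heatmap) -> Tuple[int, int, float]:
    """Return (x, y, score) of the highest value in the heatmap."""
    best = (0, 0, heatmap[0][0])
    for row, values in enumerate(heatmap):
        for col, value in enumerate(values):
            if value > best[2]:
                best = (col, row, value)
    return best


def weighted_mse(pred: Heatmap, target: Heatmap, weight: float) -> float:
    """Mean squared error between two heatmaps, scaled by a per-joint weight.

    Assumption: weight is the visibility coefficient, so a joint with
    weight 0 (occluded) does not contribute to the loss.
    """
    count = len(target) * len(target[0])
    total = sum((p - t) ** 2
                for pred_row, target_row in zip(pred, target)
                for p, t in zip(pred_row, target_row))
    return weight * total / count


# Hypothetical 48x64 heatmap with a joint located at (12, 20).
target = gaussian_heatmap(12, 20, width=48, height=64)
x, y, score = decode_peak(target)
```

At prediction time the same decoding step would be applied to each heatmap produced by the network, giving one estimated position per joint.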

Diagram

Example images

References: Xiao, B., Wu, H., & Wei, Y. (2018). Simple baselines for human pose estimation and tracking. Lecture Notes in Computer Science. https://doi.org/10.1007/978-3-030-01231-1_29